The debate around traditional dubbing vs AI is no longer theoretical – it’s operational. Studios are scaling multilingual libraries. Course creators are reaching international audiences. Streaming platforms are competing on release windows across LATAM, MENA, and Southeast Asia simultaneously. The bottleneck in every case is the same: localization workflows built for single-language, single-episode production that can’t hold up when the volume multiplies.

Traditional dubbing was never designed for that kind of scale. AI dubbing was. And within AI dubbing, the gap between platforms is growing – not in whether they can dub, but in whether they can dub accurately, consistently, and at the speed global distribution actually demands.

This article compares traditional dubbing vs AI across four dimensions: cost per minute, production timelines, scalability across series, and long-term operational efficiency – and examines where Echo9 specifically changes the equation.

Traditional dubbing is a studio-driven, sequential process with multiple handoff points – each one a potential delay. Understanding the full workflow explains why it struggles to scale.

A single episode moves through script translation and cultural adaptation, voice actor casting, studio session scheduling, segmented dialogue recording, audio editing and lip-sync alignment, and at least one round of quality review and corrections before anything is approved. Every stage depends on the previous one completing first. There is no parallel execution.

Voice actors must align availability across time zones. Studios must allocate recording time that fits multiple actors’ schedules. Editors must manually match lip movement and pacing frame by frame. When revisions come back – and they do – the process re-enters the queue from the editing stage. For a single 45-minute episode, this cycle typically takes days at minimum and weeks at the outer edge depending on language pair, talent availability, and revision rounds.

Multiply that by a 20-episode season across three languages and the timeline compounds before a single frame reaches an international audience. Each language runs its own independent production track. There is no shared infrastructure between them. Coordination overhead grows with every additional market added, and that overhead is paid in full for every new season produced.

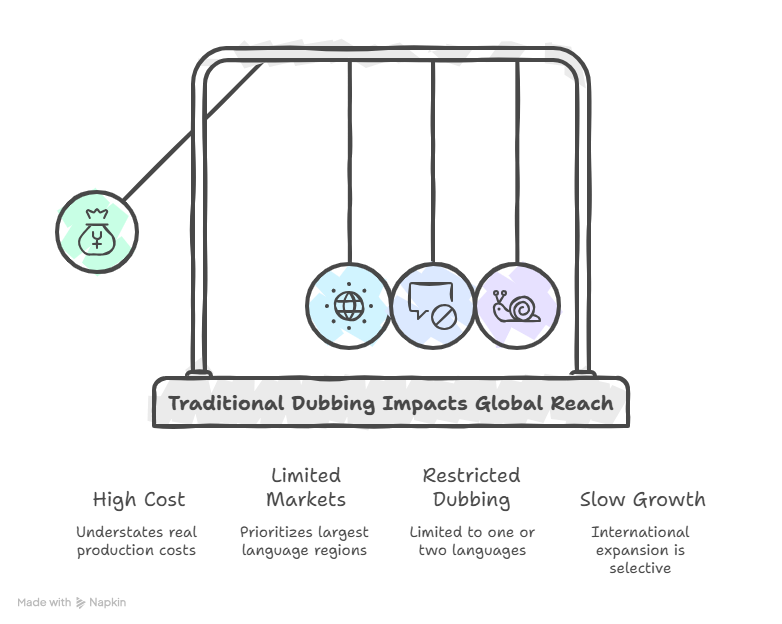

Cost is where the traditional model first starts to break. The headline rate per minute understates the real number once the full production picture is factored in.

Industry rates for traditional dubbing typically range between $80 and $250 per minute. That baseline reflects studio time and voice talent. What pushes it higher: revisions that require re-booking studio sessions, re-recording segments when tone or pacing doesn’t land, premium rates for well-known voice talent, and coordination fees when production spans multiple vendors or time zones. The revision cycle is particularly expensive because in traditional workflows, fixing a problem means restarting a scheduling chain.

For a 10-episode season at 45 minutes per episode – 450 minutes total – the numbers become difficult to work with quickly. At a modest average of $120 per minute, one language costs approximately $54,000. Dub into three languages, which is table stakes for any platform pursuing LATAM, MENA, or European expansion simultaneously, and the total reaches roughly $162,000 for a single season.
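
The arithmetic above can be reproduced with a short calculation. This is a minimal sketch: the function name and parameters are illustrative, and the $120 rate is the example average used in this article, not a quoted price.

```python
def season_cost(episodes: int, minutes_per_episode: int,
                rate_per_minute: float, languages: int = 1) -> float:
    """Total dubbing cost for one season across a number of languages."""
    total_minutes = episodes * minutes_per_episode
    return total_minutes * rate_per_minute * languages

one_language = season_cost(10, 45, 120)                   # 450 minutes at $120
three_languages = season_cost(10, 45, 120, languages=3)   # same season, three languages

print(f"${one_language:,.0f}")      # $54,000
print(f"${three_languages:,.0f}")   # $162,000
```

Swapping in $80 or $250 per minute gives the low and high ends of the typical range for the same season.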

These economics force a structural compromise. Content owners end up prioritizing only the largest markets because secondary regions don’t justify the per-minute cost. Dubbing gets restricted to one or two languages. Global reach becomes limited not by ambition but by budget. Traditional dubbing economics, in practice, make international growth a slow and selective process.

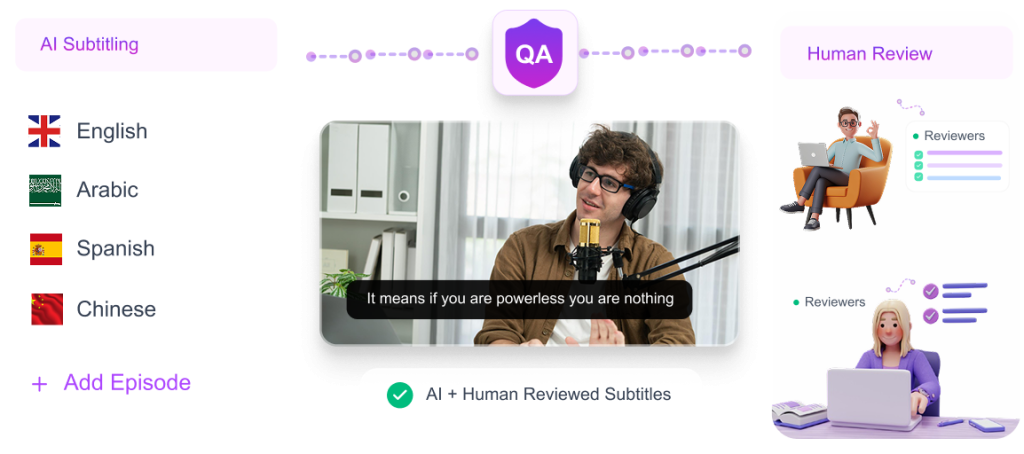

AI dubbing replaces the studio dependency with automated, parallelizable workflows – and the operational implications of that shift are larger than they first appear.

The production chain runs through automated transcription, machine translation with human editorial review, AI voice generation, synchronization and alignment, and structured quality assurance. None of these stages require studio availability or actor scheduling. Processing runs on demand. Multiple episodes can be handled simultaneously. Multiple languages can run in parallel.
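
The shape of that workflow can be sketched in a few lines. This is an illustration only, not Echo9's implementation: the stage names mirror the list above, the function and variable names are hypothetical, and each stage is a placeholder that simply records that it ran.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

# Stage order taken from the description above; names are illustrative.
STAGES = ["transcribe", "translate_and_review", "generate_voice",
          "synchronize", "quality_assurance"]

def run_job(job: tuple[int, str]) -> dict:
    """Run every stage in order for one (episode, language) pair."""
    episode, language = job
    completed = []
    for stage in STAGES:
        # A real system would call a service here; this sketch only records the step.
        completed.append(stage)
    return {"episode": episode, "language": language, "stages": completed}

episodes = range(1, 4)
languages = ["es", "ar", "id"]
jobs = list(product(episodes, languages))  # every episode in every language

# Stages stay ordered within a job, but jobs do not wait on each other.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(run_job, jobs))

print(len(results))  # 9
```

The point of the sketch is the structure: ordering constraints exist inside a single episode-language job, not between jobs, which is what allows episodes and languages to be processed side by side.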

In traditional dubbing, adding a language adds a full production track with its own sequential timeline. In AI dubbing, adding a language adds compute time, not human coordination time. The difference in scaling behavior is fundamental. A 10-episode season in one language and a 10-episode season in five languages don’t require five times the wall-clock time – they can run in parallel and complete within a comparable window.
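
A simplified model makes the scaling difference concrete. It assumes every language takes the same amount of time and that available capacity is the only constraint; the function name and the figure of 6 time units per language are hypothetical.

```python
import math

def wall_clock(units_per_language: float, languages: int, parallel_tracks: int) -> float:
    """Elapsed time when languages are processed in batches of `parallel_tracks`."""
    batches = math.ceil(languages / parallel_tracks)
    return batches * units_per_language

# One track at a time: elapsed time grows with every language added.
print(wall_clock(6, languages=5, parallel_tracks=1))   # 30
# Capacity for all five at once: elapsed time matches a single language.
print(wall_clock(6, languages=5, parallel_tracks=5))   # 6
```

In practice per-language review still takes human time, so the parallel case is a lower bound rather than a guarantee.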

AI handles speed and volume. Human editors in Echo9’s workflow handle what AI can’t fully automate: cultural validation, tone calibration, terminology governance, and final quality sign-off. The pipeline is not fully autonomous – it’s designed to be fast at the stages that don’t require judgment and careful at the stages that do. That distinction matters for accuracy, which is covered in more detail below.

The per-minute cost gap between traditional and AI dubbing is significant at the unit level. At scale across a content library, it becomes a strategic difference.

AI dubbing on most platforms falls between $10 and $40 per minute. Echo9’s pricing reflects the platform’s production infrastructure – not just voice generation, but synchronization, quality review tooling, and Series Management. Using a conservative $25 per minute, the same 450-minute season that cost $54,000 per language in traditional workflows costs approximately $11,250 – a reduction of nearly 80 percent.
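
The percentage follows directly from the two example rates. A minimal sketch, using the article's illustrative $120 and $25 per-minute figures; the helper name is an assumption.

```python
def reduction_percent(old_cost: float, new_cost: float) -> float:
    """Percentage saved when moving from old_cost to new_cost."""
    return (old_cost - new_cost) / old_cost * 100

minutes = 10 * 45              # one 10-episode season at 45 minutes each
traditional = minutes * 120    # 54,000
ai = minutes * 25              # 11,250

print(ai)                                             # 11250
print(round(reduction_percent(traditional, ai), 1))   # 79.2
```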

The more important question isn’t how much is saved per season – it’s what becomes possible when those savings compound. A content owner that previously could afford to dub one season in two languages (about $108,000 at $120 per minute) can cover roughly nine season-language versions at $25 per minute, for example three seasons in three languages, within the same budget; three seasons in five languages fits once the rate is around $16 per minute or lower. Markets that were economically out of reach become viable. Expansion decisions stop being constrained by localization economics and start being driven by audience opportunity.
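
How far a fixed budget stretches depends heavily on the per-minute rate. The sketch below takes the cost of one season in two languages at the example $120 rate as the budget and counts how many season-language versions it covers at three points in the $10 to $40 range; the function name is illustrative.

```python
def versions_within_budget(budget: float, season_minutes: int, rate_per_minute: float) -> int:
    """Whole season-language versions that fit inside the budget."""
    return int(budget // (season_minutes * rate_per_minute))

budget = 2 * 450 * 120   # one season, two languages, traditional example rate

for rate in (10, 25, 40):
    print(rate, versions_within_budget(budget, 450, rate))
# 10 24
# 25 9
# 40 6
```

A "version" here is one season in one language, so nine versions could be three seasons in three languages. The count swings widely with the rate, which is why the rate assumption matters when planning expansion.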

| Factor | Traditional Dubbing | AI Dubbing (Echo9) |
|---|---|---|
| Cost per minute | $80–$250 | Significantly lower |
| Timeline (10-ep season) | 5–8 weeks per language | 2–5 days |
| Scalability | Linear, expensive | Parallel, efficient |
| Revision cost | Expensive, re-schedules studio | Fast, in-platform |
| Voice accuracy | Talent-dependent | Neural model + human review |
| Series consistency | Manual, varies by season | Locked via Series Management |

Speed is where the operational gap between traditional and AI dubbing is most visible – and most consequential for platforms competing on release windows.

For a 10-episode season in a single language, a traditional dubbing workflow typically looks like this: script preparation takes one to two weeks, casting and scheduling take another week, recording runs two to four weeks depending on actor and studio availability, and post-production and revisions add one to two weeks on top. Run back to back, those stages total five weeks at best and up to nine if every stage runs long, so five to eight weeks per language is a realistic planning range, and that is before anything reaches a viewer. For three languages running sequentially, the pre-launch localization period extends to months.
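
Summing the stage ranges shows where the per-language estimate comes from. A small sketch using only the durations listed above; the dictionary and variable names are illustrative. The worst case lands slightly above the five to eight week figure because it assumes every stage hits its maximum at once.

```python
# (minimum weeks, maximum weeks) per stage, as listed above
STAGE_WEEKS = {
    "script preparation": (1, 2),
    "casting and scheduling": (1, 1),
    "recording": (2, 4),
    "post-production and revisions": (1, 2),
}

best_case = sum(low for low, _ in STAGE_WEEKS.values())
worst_case = sum(high for _, high in STAGE_WEEKS.values())
print(best_case, worst_case)            # 5 9

# Three languages produced one after another
print(3 * best_case, 3 * worst_case)    # 15 27
```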

With Echo9’s AI-driven workflow, transcription and translation take hours to a few days depending on episode count. Voice generation for a full episode runs in hours. Batch processing across all episodes in a season runs in parallel. A full 10-episode season in one language typically completes in two to five days. Multiple languages run simultaneously without extending that window proportionally.

For streaming platforms, this isn’t a production efficiency story – it’s a revenue story. Simultaneous global release across markets drives higher opening-period engagement and eliminates the window in which audiences in secondary markets encounter spoilers and lose interest. The ability to localize a full season in days rather than weeks means a show can launch globally on its intended date instead of in phases.

Accuracy is the objection most commonly raised against AI dubbing, and it deserves a direct answer. The question isn’t whether early AI dubbing had accuracy limitations – it did. The question is whether modern neural voice systems, combined with structured human oversight, produce output that meets production standards. For Echo9, the answer is yes across three dimensions.

Neural text-to-speech models in Echo9 are trained on extensive natural speech data to replicate not just words but delivery – the pauses, emphasis shifts, and tonal variation that carry emotional meaning. A line of dialogue that should land with urgency is generated with urgency. A scene that requires restraint is rendered with restrained delivery. These aren’t post-hoc adjustments applied uniformly; they’re generated contextually based on the speech model’s understanding of the content.

Pronunciation accuracy in AI dubbing depends on how well the underlying model understands the content’s domain and context. Echo9’s voice generation handles technical terminology, proper nouns, brand names, and language-specific pronunciation patterns through a combination of model training and the platform’s centralized terminology database. Consistent pronunciation across every episode in a series is managed automatically – not reviewed manually episode by episode.

Echo9 doesn’t treat quality assurance as a final check – it’s built into the workflow as an active layer. Editors can review synchronization alignment at scale, edit translations directly within the platform, flag terminology inconsistencies against the series database, and collaborate across review stages without switching tools. The output of AI generation is the input to a structured human review process, not the final product. That layered approach is what makes production-grade accuracy achievable at AI speed.
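
To illustrate what flagging terminology inconsistencies against a series database can look like, here is a minimal sketch. It is not Echo9's API: the function, the glossary structure, and the sample series term are all hypothetical, and a production check would need language-aware matching rather than simple substring tests.

```python
import re

def flag_term_issues(source: str, translation: str, glossary: dict[str, str]) -> list[str]:
    """Return one message per glossary term whose approved rendering is missing."""
    issues = []
    for source_term, approved in glossary.items():
        in_source = re.search(rf"\b{re.escape(source_term)}\b", source, re.IGNORECASE)
        if in_source and approved.lower() not in translation.lower():
            issues.append(f"'{source_term}' should be rendered as '{approved}'")
    return issues

# Hypothetical series glossary: source term -> approved Spanish rendering.
glossary = {"Starfall Academy": "Academia Starfall"}

print(flag_term_issues(
    "Welcome to Starfall Academy.",
    "Bienvenidos a la Academia Estrella.",
    glossary,
))
```

A translation that uses the approved rendering returns an empty list, so an editor only sees the lines that need attention.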

Consistency across episodes is a cost that rarely gets calculated upfront in traditional dubbing – and it compounds over time in ways that are expensive to fix.

In traditional workflows, consistency depends entirely on continuity of people. When a voice actor becomes unavailable between seasons, a replacement is cast who sounds different. When a new translator takes over a project, terminology shifts subtly. When tone guidelines aren’t documented, different directors interpret emotional scenes differently. None of these are failures of craft – they’re structural vulnerabilities of a workflow that relies on human continuity across a production that may span years.

For long-running dramas, animated series, or structured e-learning programs, inconsistency across episodes creates a fragmented audience experience. Viewers notice when a character’s voice changes between seasons even if they can’t articulate why. Learners in a course notice when an instructor’s voice inflection shifts across modules. These inconsistencies don’t just affect perception of the localization – they affect perception of the content itself.

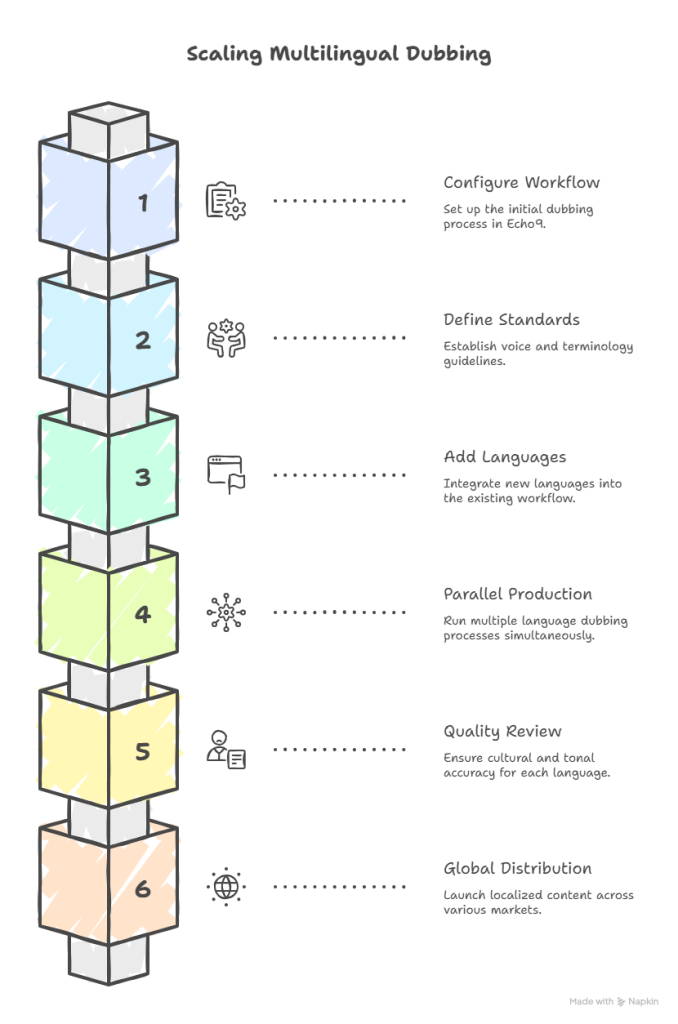

Series Management is the structural feature that separates Echo9 from general-purpose AI dubbing tools. It was built specifically for long-form content where consistency across episodes is not optional.

Teams define voice standards, terminology guidelines, and style frameworks at the beginning of a project. Those standards are stored in Echo9’s centralized infrastructure and applied automatically to every subsequent episode. Character voice profiles are locked using voice cloning – the same tonal profile, the same delivery characteristics, the same emotional range – applied consistently whether the episode was produced in week one or month eighteen.

Series Management locks the elements that must stay consistent: character voices, terminology, brand language, and approved translation patterns. It keeps flexible the elements that should vary: scene-specific tone, pacing adjustments for different content types, and language-specific adaptation choices made by human editors. The result is consistency where consistency matters and flexibility where flexibility improves quality.
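
The split between locked and flexible elements can be pictured as two kinds of configuration. The sketch below is an assumption about how such a split might be modeled, not a description of Echo9's data model; every class, field, and value is hypothetical.

```python
from dataclasses import dataclass, FrozenInstanceError
from types import MappingProxyType
from typing import Mapping

@dataclass(frozen=True)
class SeriesStandards:
    """Series-wide elements that must not change between episodes."""
    voice_profiles: Mapping[str, str]
    terminology: Mapping[str, str]

@dataclass
class EpisodeSettings:
    """Per-episode elements that editors are free to adjust."""
    episode: int
    pacing: str = "default"
    tone_notes: str = ""

standards = SeriesStandards(
    voice_profiles=MappingProxyType({"Mara": "voice-profile-mara-v1"}),
    terminology=MappingProxyType({"Starfall Academy": "Academia Starfall"}),
)

ep12 = EpisodeSettings(episode=12)
ep12.pacing = "slow"   # flexible: allowed

try:
    standards.voice_profiles = {}   # locked: rejected
    locked = False
except FrozenInstanceError:
    locked = True

print(locked)  # True
```

The frozen dataclass blocks reassignment of the fields and the read-only mapping blocks edits to individual entries, so changing a series standard has to be a deliberate, separate action rather than a side effect of episode work.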

Instead of rebuilding context for every new episode or resetting workflow standards at the start of every new season, production builds on a documented foundation. New editors onboard faster. QA cycles are shorter because the standards are defined and enforced by the system. Season two doesn’t require the same ramp-up as season one. That efficiency accumulates across every episode added to the library.

Consumer preference for native-language content is consistent across markets – engagement rates, watch time, and audience retention all increase with properly localized content. The operational question is how to act on that preference at volume without rebuilding infrastructure for each new language.

Traditional dubbing scales linearly and expensively. Each additional language is an independent production track: separate casting, separate studio scheduling, separate editing, separate review cycles. The tenth language costs roughly as much to produce as the first. There is no infrastructure reuse, no shared terminology governance, no accumulated efficiency from having produced the first nine.

Once a series workflow is configured in Echo9, adding additional languages is a repeatable process. Voice and terminology standards defined for the first language carry over into every subsequent one. The same Series Management framework governs consistency across all language versions simultaneously. Echo9 supports AI dubbing in over 100 languages, and the workflow doesn’t require reconstruction for each new market – it extends.

A streaming platform expanding from English into Spanish, Arabic, and Indonesian doesn’t run three separate production timelines. All three run in parallel within the same Echo9 workflow, governed by the same Series Management standards. Each language version undergoes its own human quality review pass for cultural and tonal accuracy, but the foundational infrastructure – voice profiles, terminology, synchronization standards – is shared. Distribution scales without operational disruption.

The economics and operational reality of traditional dubbing vs AI aren’t close at scale. Traditional dubbing is well-suited for premium, performance-driven productions where time and budget are secondary considerations. For digital content distribution at volume – where speed to market, cost per language, and consistency across episodes all directly affect revenue – AI dubbing has become the operationally viable choice.

Echo9 advances that argument further with three specific advantages: accuracy through neural voice generation and structured human quality review, speed through parallel processing and on-demand workflows, and consistency through Series Management that governs voice profiles, terminology, and production standards across every episode and language simultaneously. Localization stops being a post-production bottleneck and becomes a repeatable, scalable part of a global distribution strategy.

Ready to reduce dubbing costs and scale multilingual releases faster? Visit Echo9.ai to explore a smarter approach to localization.

1. Is AI dubbing cheaper than traditional dubbing?

Yes, significantly. Traditional studio-based workflows typically run $80 to $250 per minute, and re-recorded segments, multi-vendor coordination, and revision cycles push that figure higher. AI dubbing through Echo9 costs a fraction of that, representing a 60 to 80 percent reduction depending on content type and language pair.

2. How fast is AI dubbing compared to traditional dubbing?

Traditional dubbing for a 10-episode season typically takes five to eight weeks per language. Echo9 processes the same season in two to five days through automated transcription, parallel voice generation, and batch episode processing. For platforms competing on simultaneous global release, that speed gap determines whether a show launches across all markets on day one or rolls out in phases over months.

3. Does AI dubbing maintain voice consistency across episodes?

With Echo9’s Series Management, yes. Voice cloning locks consistent character identity throughout a series. Character profiles don’t drift between seasons when talent becomes unavailable, because the voice standard is stored in the platform rather than residing with a specific actor.

4. How accurate is AI dubbing for emotional scenes?

Echo9’s neural voice models replicate emotional tone, pacing, and contextual emphasis with significantly more accuracy than earlier AI systems. Emotional delivery is generated contextually – urgency, restraint, humor, and grief are reflected in the voice output rather than applied uniformly.

5. Is traditional dubbing becoming obsolete?

Not for high-performance productions where live studio nuance and specific talent are part of the creative brief. But for digital content distribution at scale, the market has shifted decisively. Speed, cost efficiency, and multilingual scalability now outweigh the operational overhead of studio-based workflows.

6. How many languages can Echo9 support?

Echo9 supports AI dubbing in over 100 languages, with voice and terminology standards maintained across every language version through Series Management. Adding a new language to an existing series workflow does not require rebuilding production infrastructure from scratch.