Global video consumption has expanded faster than ever.

Streaming platforms release content across continents simultaneously. YouTube creators build audiences in multiple regions. E-learning companies scale courses internationally.

But one challenge remains constant: maintaining voice identity across languages.

Subtitles help.

Traditional dubbing works.

But neither preserves the original speaker’s vocal identity.

This is where voice cloning becomes essential.

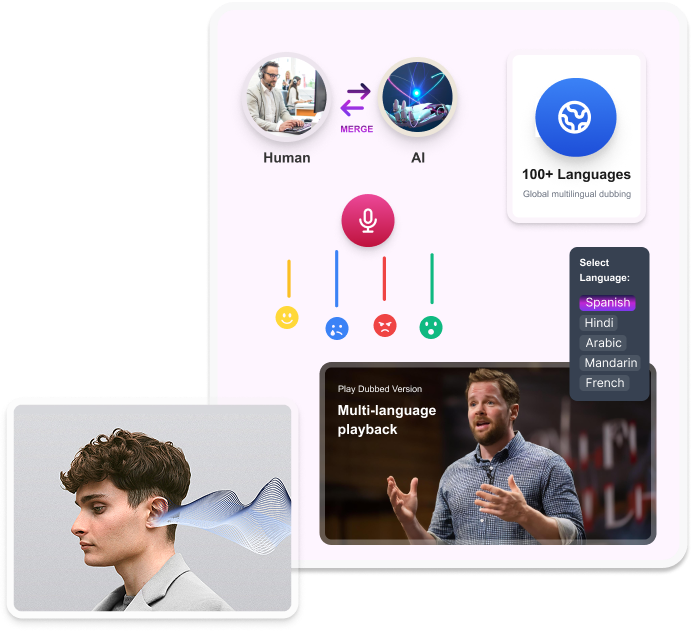

Voice cloning allows AI systems to replicate a specific speaker’s tone, rhythm, and vocal personality. When applied to AI voice dubbing, it enables localized dialogue that sounds like the same character, even in another language.

In modern video localization, voice cloning is no longer experimental. It is becoming foundational.

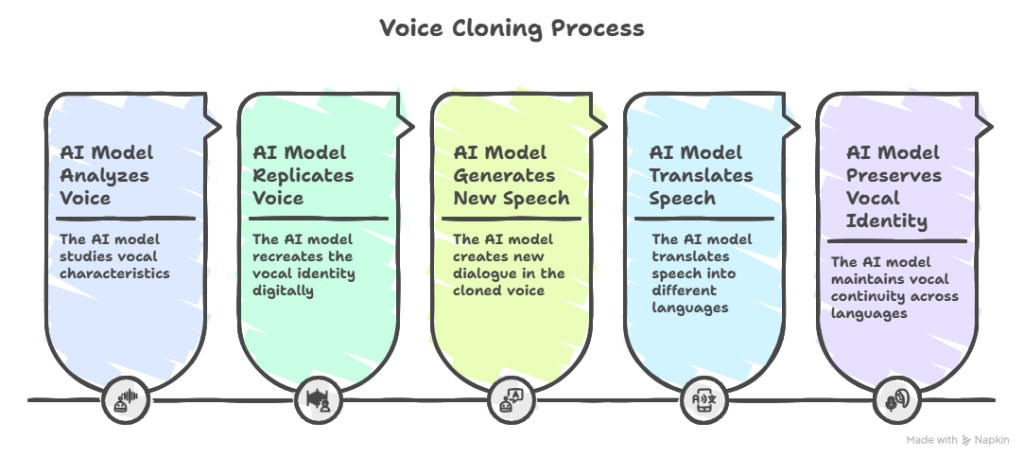

Voice cloning is an artificial intelligence process that learns and replicates a specific human voice. Instead of generating a generic synthetic sound, AI models analyze how a particular person speaks and recreate that vocal identity digitally.

To do this, the system studies characteristics such as pitch, tone, accent, and speech patterns.

But voice cloning goes deeper than surface-level sound. It captures subtle elements like pacing between words, how emphasis is placed on certain syllables, how emotions shift within a sentence, and even natural breathing patterns.

Once trained, the AI can generate entirely new speech in that same voice, even if the original speaker never recorded those exact lines. The cloned voice does not replay existing audio; it synthesizes new dialogue while maintaining the speaker’s vocal identity.

In the context of dubbing, this capability becomes transformative.

It means that a character speaking English can sound like themselves in Spanish, Arabic, Mandarin, or any other supported language. Instead of replacing the original performance with a completely different voice actor, voice cloning preserves vocal continuity across languages.

This is particularly important for:

The goal of voice cloning is not imitation for novelty or mimicry.

The goal is identity preservation.

It ensures that when audiences switch languages, they do not lose connection to the character, narrator, or brand voice they recognize.

When used responsibly and with proper consent, voice cloning becomes a powerful tool for maintaining narrative integrity at global scale.

The process of voice cloning relies on three key technologies:

While the underlying technology is complex, the workflow follows a clear structure.

First, clean voice samples are collected. These samples are usually a few minutes long and capture the speaker’s natural speaking style. The quality of these samples directly affects the output.

Next, the AI model analyzes vocal features, including pitch variation, phoneme transitions, rhythm, and emotional cues. This creates a voice profile that can be reused consistently.

Once the voice profile exists, new text can be fed into the system. The AI generates speech that mirrors the cloned voice’s characteristics. It also matches the phonetics and pacing of the target language.

In AI voice dubbing, the synthesized speech is synced with the video so that it matches the timing, scene context, and emotional delivery. The final result is reviewed, adjusted, and approved before release.
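The syncing step can be made concrete with a small timing check. The sketch below is illustrative only: `Segment`, `fit_rate`, and the 1.15 speed-up cap are hypothetical names and values chosen for this example, not part of any specific product. It compares the length of a synthesized line with the time slot the original line occupied and decides whether a mild speed-up is enough or whether a reviewer should shorten the translation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Segment:
    """One line of dialogue and its slot in the original video (seconds)."""
    start: float
    end: float
    text: str

    @property
    def slot(self) -> float:
        return self.end - self.start


def fit_rate(synth_duration: float, slot: float, max_rate: float = 1.15) -> Tuple[float, bool]:
    """Return (playback_rate, needs_review) for fitting audio into a slot.

    A rate above 1.0 means the audio is sped up. The 1.15 cap is an
    illustrative assumption, not an industry constant.
    """
    if slot <= 0 or synth_duration <= 0:
        raise ValueError("durations must be positive")
    rate = synth_duration / slot
    if rate <= 1.0:
        # Audio already fits; keep natural speed and pad with silence.
        return 1.0, False
    if rate <= max_rate:
        # A mild speed-up is enough to fit the slot.
        return rate, False
    # Still too long at the cap: flag the line for human review.
    return max_rate, True


seg = Segment(start=12.0, end=15.0, text="Nos vemos mañana en la oficina.")
rate, needs_review = fit_rate(synth_duration=3.3, slot=seg.slot)
print(f"rate={rate:.2f}, needs_review={needs_review}")
```

Capping the speed-up and routing the overflow to a reviewer mirrors the review-and-adjust stage described above: the automated step handles small mismatches, and a person handles lines that would sound rushed.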

Dubbing is not just about translating words. It is about carrying intent, emotion, and identity across cultures. Voice plays a central role in how audiences connect with content.

Without voice cloning, a character’s voice can change between languages, and it may even vary between episodes. This reduces immersion and is especially noticeable in serialized content such as TV shows, documentaries, or courses.

Voice cloning enables:

These benefits show why AI voice dubbing is widely used. Streaming platforms, content creators, and corporate teams all rely on it.

Read more: What is video dubbing

One of the biggest challenges in AI dubbing is consistency across multiple episodes, videos, or modules. This is where most generic tools fall short.

Echo9 solves this with Series Management. It’s designed for long-form and recurring content.

Series Management allows teams to keep voices, terminology, and quality consistent across episodes and languages.

Instead of treating each video as separate, Echo9 looks at content as part of a bigger story. This is critical for TV series, podcasts, training libraries, and serialized YouTube channels.

Echo9 integrates voice cloning directly into its AI dubbing workflow. The process begins with voice selection or approved voice cloning. The choice depends on the project’s requirements.

Once voices are assigned, Echo9 applies them consistently across all related content. Dialogue is generated with attention to pacing, emotional tone, and timing alignment. Subtitles, dubbing, and translations are managed within the same platform.
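One way to picture series-level voice consistency is a registry that binds each character to a single voice profile and reuses that binding for every episode. The sketch below is a generic illustration, not Echo9's implementation: the class `SeriesVoiceRegistry`, its methods, and the voice IDs are all hypothetical.

```python
from typing import Dict, Iterable


class SeriesVoiceRegistry:
    """Binds each character in a series to one voice profile ID (hypothetical)."""

    def __init__(self) -> None:
        self._voices: Dict[str, str] = {}

    def assign(self, character: str, voice_id: str) -> None:
        """Bind a character to a voice; refuse to silently rebind it."""
        existing = self._voices.get(character)
        if existing is not None and existing != voice_id:
            raise ValueError(f"{character!r} is already bound to {existing!r}")
        self._voices[character] = voice_id

    def cast(self, episode_characters: Iterable[str]) -> Dict[str, str]:
        """Return the voice for each character in an episode.

        Fails if any character has no assigned voice, so an episode
        cannot be dubbed with an ad hoc substitute.
        """
        characters = list(episode_characters)
        missing = [c for c in characters if c not in self._voices]
        if missing:
            raise KeyError(f"no voice assigned for: {missing}")
        return {c: self._voices[c] for c in characters}


registry = SeriesVoiceRegistry()
registry.assign("Narrator", "voice-001")
registry.assign("Dr. Amal", "voice-002")

episode_1 = registry.cast(["Narrator", "Dr. Amal"])
episode_7 = registry.cast(["Narrator"])
print(episode_1["Narrator"] == episode_7["Narrator"])
```

Because every episode draws from the same registry, a character cannot drift to a different voice between episodes unless someone changes the assignment deliberately.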

Quality assurance is built into the workflow. Teams can review pronunciation, timing, and delivery before final output. This reduces rework and ensures production-level results.

Echo9’s approach focuses on repeatability and governance, not just one-off outputs.

Voice cloning is not limited to entertainment. Its applications span multiple industries.

Streaming platforms use it to localize shows while preserving character identity. E-learning providers rely on it to keep instructor voices consistent across languages. Brands use it to maintain a recognizable tone in global campaigns. Podcast networks apply it to expand reach without recasting hosts.

In all these cases, AI voice dubbing reduces friction while improving the audience experience.

Voice cloning will keep improving in emotional range, natural pacing, and language adaptability. However, the technology alone is not enough.

The real differentiator will be the platform you choose, because the platform is what manages consistency, quality, and governance at scale. As content libraries grow, tools like Echo9 that treat dubbing as a system rather than a feature will define industry standards.

Echo9 is not a generic text-to-speech tool. It is a localization platform built for structured, repeatable content workflows.

Echo9 combines voice cloning, AI dubbing, subtitles, and Series Management. This helps teams localize content while keeping identity and control. This makes it suitable for professional studios, media teams, and global brands.

Voice Cloning is the future of Voice Replication

Echo9 is built to support scalable voice cloning and AI dubbing across media, entertainment, and training content. It combines AI-powered speed with structured Series Management to maintain consistency in voices, terminology, and quality across episodes and languages.

Whether you’re localizing a drama series, educational program, or corporate training library, Echo9 simplifies multilingual production without fragmenting your workflow. Subtitles and dubbing can be managed together: aligned, version-controlled, and production-ready.

If you’re exploring voice cloning or AI dubbing at scale, Echo9 provides both the infrastructure and governance to do it responsibly.

Explore Echo9’s capabilities in AI dubbing and voice replication. Or book a demo to see how it integrates seamlessly into your existing localization and content operations.

Voice cloning is an AI technology that learns how a specific person sounds by analyzing their pitch, tone, accent, and speech patterns. It then recreates that voice digitally so it can speak new words that were never originally recorded.

No. Traditional text-to-speech generates generic synthetic voices that are not tied to a real individual. Voice cloning, on the other hand, replicates the unique vocal identity of a specific speaker, making the output more personalized and realistic.

Voice cloning allows a character or narrator to sound consistent across different languages. In dubbing, this means the Spanish, Arabic, or French version of a show can still feel like the same character, preserving identity across episodes.

Yes, when it is used with proper consent and clearly defined usage rights. Ethical implementation includes documented permissions, contractual safeguards, and clear boundaries on how the replicated voice can be used.

Modern systems can replicate emotional tones such as excitement, sadness, or urgency with increasing accuracy. However, human review and quality assurance are still important to ensure emotional authenticity in high-impact scenes.

Yes. Echo9’s Series Management framework is designed specifically for multi-episode and serialized content. It maintains consistency in voices, terminology, and workflow across entire seasons.

Yes. Echo9 integrates subtitling and AI voice dubbing within a unified workflow. This ensures alignment between translated scripts, timing, and voice outputs, reducing fragmentation across tools.