📅 Mar 2, 2026

Video content is no longer limited by geography.

A film released in Pakistan can trend in Latin America. An online course recorded in English can reach learners in Europe and Southeast Asia. A YouTube creator can build an international audience in months.

But one barrier remains constant: language.

This is where video dubbing becomes essential.

Dubbing allows content creators and companies to replace the original spoken audio with a translated voice track. Instead of reading subtitles, audiences hear content in their own language, naturally and immersively.

In recent years, AI dubbing has transformed this process. What once required studios, voice actors, and weeks of production can now be executed faster, more affordably, and at scale.

What Is Dubbing and How Does It Work?

Video dubbing involves recording spoken audio in a target language and then carefully synchronizing it with the original video. The goal is to make the experience feel natural, immersive, and culturally adapted for viewers who do not understand the original language.

Dubbing is commonly used in:

- Films and TV series

- Streaming content

- YouTube videos

- Corporate training modules

- E-learning courses

- Marketing campaigns

It is one of the most powerful forms of video localization.

At its core, dubbing is not just a technical process; it is a storytelling adaptation process. The goal is to take spoken dialogue from one language and recreate it in another without losing the emotional and narrative integrity of the original content.

Unlike subtitles, which layer translation on top of existing audio, dubbing replaces the original voice entirely. This means the new voice must feel native to the visuals, the characters, and the pacing of the story.

Why Is Video Dubbing Important?

Video dubbing is no longer a “nice-to-have” feature in content strategy. In today’s global content economy, it has become a competitive differentiator.

As audiences consume content across borders, language becomes the final barrier between visibility and true engagement. Subtitles can help bridge the gap, but for many viewers, listening in their native language creates a far more natural and immersive experience.

Dubbing does more than translate dialogue. It transforms how audiences connect with content.

Here’s how video dubbing directly impacts performance and growth:

- Audience Reach: Language determines accessibility. Even where English is common, many viewers prefer consuming content in their native language. Dubbing removes friction and opens content to broader demographics, especially in emerging markets, children’s programming, and corporate training.

- Engagement: When effort decreases, engagement increases. Dubbing allows viewers to focus fully on visuals and emotion instead of reading subtitles. This often leads to better completion rates and stronger emotional connection.

- Watch Time & Retention: Localized audio improves retention and session duration. When content feels native, audiences are less likely to drop off. Higher watch time also improves visibility on algorithm-driven platforms.

- Brand Trust & Credibility: Speaking the audience’s language signals commitment and cultural awareness. Localized voice delivery builds familiarity and strengthens brand perception, especially in education, corporate, and institutional sectors.

- Revenue Expansion: Dubbing unlocks new regional markets and monetization opportunities. By localizing content, companies can expand subscriber bases, advertising reach, and international demand.

- Strategic Advantage at Scale: As content libraries grow, manual localization becomes inefficient. Scalable AI dubbing systems enable consistent, repeatable workflows, turning localization into a sustainable growth engine.

Localization must be structured, repeatable, and efficient so that organizations can expand globally without rebuilding workflows from scratch.

In the modern content ecosystem, video dubbing is no longer optional. It is a strategic growth lever.

Fundamentals of Video Dubbing

Dubbing is more than replacing one language with another. It is a structured creative process that combines linguistic accuracy, vocal performance, and technical precision. Each stage builds upon the previous one, transforming original dialogue into a version that feels authentic to a new audience.

At its core, successful dubbing rests on three essential pillars: translation, voice performance, and synchronization. Let’s dig into the details of each:

1. Translation

This is the linguistic foundation of dubbing. Dialogue must be converted into the target language while preserving:

- Meaning

- Context

- Tone

- Cultural references

However, effective dubbing is not literal translation. It requires adaptation. Idioms may need rewriting. Humor may need cultural adjustment. Emotional scenes must carry the same intensity. The translation must feel natural, not mechanically converted.

2. Voice Recording

Once the script is adapted, the dialogue must be performed. Traditionally, this involved professional voice actors recording lines in studios under the direction of audio engineers. Today, this stage may involve:

- Studio-recorded voice actors

- High-quality AI-generated voices

- Consent-based voice clones

Regardless of the method, the new voice must:

- Match the character’s age and personality

- Reflect emotional tone

- Maintain consistency across scenes

- Fit the visual performance

Voice performance is where technical translation becomes emotional storytelling.


3. Synchronization

After recording or generating the new voice, the audio must be synchronized with the original video.

This includes:

- Matching sentence length

- Aligning pauses

- Timing emotional beats

- Adjusting pacing

- In some cases, approximating lip movements

Poor synchronization breaks immersion immediately. Even a slight delay between lip movement and audio can make dubbed content feel artificial. Synchronization transforms translated audio into believable dialogue.
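To make the timing problem concrete, here is a minimal sketch of one check a sync step might run: how much would each dubbed line need to be sped up or slowed down to fit the time slot of the original line? The `Segment` type, the function names, and the tolerance values (15% faster or slower) are illustrative assumptions, not figures from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One line of dialogue and its slot in the original video (seconds)."""
    start: float
    end: float
    dubbed_duration: float  # length of the newly recorded or generated audio

    @property
    def slot(self) -> float:
        return self.end - self.start


def speed_factor(seg: Segment) -> float:
    """Playback-rate multiplier that makes the dubbed audio fill the slot exactly."""
    return seg.dubbed_duration / seg.slot


def needs_rewrite(seg: Segment, max_speedup: float = 1.15, max_slowdown: float = 0.85) -> bool:
    """Flag lines whose required rate change exceeds the assumed tolerance."""
    factor = speed_factor(seg)
    return factor > max_speedup or factor < max_slowdown


segments = [
    Segment(start=0.0, end=2.0, dubbed_duration=2.1),  # slightly long, within tolerance
    Segment(start=2.5, end=4.5, dubbed_duration=3.0),  # would need 1.5x playback
]
flagged = [s for s in segments if needs_rewrite(s)]
```

Lines that end up in `flagged` are better fixed in the script than in the audio: shortening the translated sentence usually sounds more natural than stretching the voice.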

But the real challenge of dubbing goes beyond these three technical steps. It is not just about translating words or recording voices. It is about preserving:

- Tone

- Emotion

- Intent

- Character personality

- Cultural nuance

For example:

A sarcastic line must still sound sarcastic.

A romantic confession must still feel intimate.

A comedic punchline must still land naturally.

When dubbing is done well, viewers should not feel like they are watching translated content. They should feel like the content was originally created in their language. That is the true benchmark of high-quality dubbing.

Types of Video Dubbing

Not all dubbing follows the same format. Different types of dubbing exist because content formats, budgets, audience expectations, and production timelines vary widely. A theatrical film demands a different level of immersion than a corporate training video. A documentary interview requires a different approach than an animated series.

Each dubbing type balances three core factors:

- Immersion

- Cost

- Production complexity

Let’s explore the main types in detail.

Lip-Sync Dubbing

Lip-sync dubbing is the most immersive and technically demanding form of dubbing. In this approach, the translated dialogue is carefully adapted so that it matches the lip movements of the on-screen actors as closely as possible. This often requires script rewriting to ensure syllable count and timing align naturally with mouth movements.

Lip-sync dubbing typically involves:

- Precise script adaptation

- Professional voice actors

- Studio recording sessions

- Frame-level timing adjustments

It is most commonly used in:

- Theatrical films

- High-budget streaming series

- Animated features

- Premium television productions

Because of its complexity, lip-sync dubbing is expensive and time-intensive. However, when executed well, it creates the most seamless and immersive viewing experience. Viewers often forget they are watching dubbed content.

Voice-Over Dubbing

Voice-over dubbing is less immersive but significantly more cost-effective. In this format, the original audio remains faintly audible in the background, while the translated voice plays over it. The new voice typically starts a second or two after the original speaker begins talking.

This method does not attempt to match lip movements. Instead, it prioritizes clarity and informational accuracy.

Voice-over dubbing is commonly used in:

- Documentaries

- Reality TV

- News reports

- Educational content

- Corporate presentations

Because it requires less synchronization effort, voice-over dubbing is faster to produce and more affordable. However, it offers a slightly less immersive experience compared to full lip-sync dubbing.

AI Dubbing

AI dubbing is the most recent evolution in video localization. Instead of relying entirely on studio-based voice actors, AI dubbing uses advanced voice synthesis models to generate translated speech. These voices can be pre-trained synthetic voices, custom brand voices, or consent-based voice clones.

AI dubbing systems handle:

- Transcription

- Contextual translation

- Voice generation

- Timing alignment

This approach dramatically reduces production time and recording costs, and it removes many of the scalability limits of studio-based work.
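The four stages listed above can be sketched as a simple pipeline. This is only an outline under stated assumptions: the `Line` and `DubbedLine` types and the `dub` function are hypothetical, and the three stage functions are passed in as placeholders rather than calls to any real speech or translation service. Timing alignment is reduced here to reusing each original line's time slot.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Line:
    start: float  # seconds
    end: float
    text: str


@dataclass
class DubbedLine:
    start: float
    end: float
    text: str
    audio: bytes


def dub(
    audio: bytes,
    target_lang: str,
    transcribe: Callable[[bytes], List[Line]],
    translate: Callable[[str, str], str],
    synthesize: Callable[[str, str], bytes],
) -> List[DubbedLine]:
    """Run the stages in order; each stage is an injected callable."""
    result = []
    for line in transcribe(audio):                  # 1. transcription
        text = translate(line.text, target_lang)    # 2. translation (per line here)
        voice = synthesize(text, target_lang)       # 3. voice generation
        # 4. timing alignment: keep the original line's time slot
        result.append(DubbedLine(line.start, line.end, text, voice))
    return result


# Stand-in stages so the sketch runs without any external service.
def fake_transcribe(_audio: bytes) -> List[Line]:
    return [Line(0.0, 1.5, "Hello")]


def fake_translate(text: str, lang: str) -> str:
    return f"[{lang}] {text}"


def fake_synthesize(text: str, _lang: str) -> bytes:
    return text.encode("utf-8")


out = dub(b"", "es", fake_transcribe, fake_translate, fake_synthesize)
```

Keeping the stages as swappable callables means a human translator or a studio recording can replace any single step without changing the rest of the flow.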

AI dubbing is particularly effective for:

- Large content libraries

- YouTube creators expanding globally

- E-learning platforms

- Corporate training programs

- Multi-language streaming releases

While high-budget films may still rely on traditional lip-sync dubbing, AI dubbing has become the dominant solution for scalable digital content.

Each type serves a different purpose within the broader ecosystem of video localization. Understanding these distinctions helps content creators choose the most effective and efficient strategy for reaching global audiences.

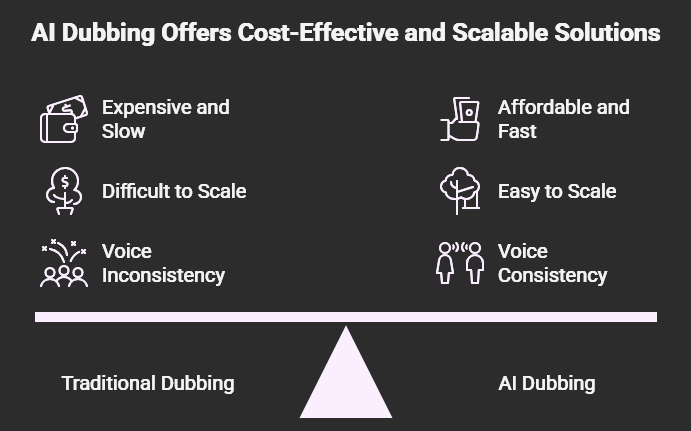

Traditional Dubbing vs AI Dubbing

For decades, traditional dubbing was the standard method for localizing video content. Studios relied on voice actors, directors, and recording facilities to recreate dialogue in other languages, delivering high-quality results for theatrical films and premium television.

However, this model was built for a different era, one with slower production cycles and limited language releases.

Today, streaming platforms launch globally, YouTube creators publish weekly to international audiences, and enterprises manage multilingual training libraries at scale. Localization is no longer occasional. It is continuous.

AI dubbing emerged to meet this demand. By combining speech recognition, contextual translation, and neural voice synthesis, it automates large parts of the workflow. The result is not just a cost reduction. It is a scalable localization model designed for digital-first content ecosystems.

To understand how traditional and AI dubbing differ, here is a structured comparison:

| Factor | Traditional dubbing | AI dubbing |
| --- | --- | --- |
| Cost | High | Lower at scale |
| Speed | Weeks | Hours to a day |
| Scalability | Limited | Global |
| Voice Consistency | Manual | Automated |
| Revision Flexibility | Complex | Easily editable |
| Best For | Cinematic releases | Recurring digital content |

Traditional dubbing continues to serve high-budget theatrical productions where precise lip-sync and performance direction are critical. AI dubbing, on the other hand, has become the dominant solution for digital-first content ecosystems where speed, scalability, and operational consistency are essential.

In modern localization strategies, the decision is no longer about quality versus automation. It is about matching the right dubbing model to the right content format.

Challenges of Dubbing

Despite its many benefits, dubbing is not a simple plug-and-play process. Whether traditional or AI-driven, dubbing introduces operational, creative, and logistical challenges, especially at scale.

As content libraries grow and multilingual releases become the norm, these challenges compound quickly. Without structured workflows and oversight, quality and consistency can suffer.

Here are some of the most common challenges in modern dubbing:

- Voice Consistency: In serialized content, characters must sound the same across episodes and languages. Even small shifts in tone or delivery can break immersion, and maintaining continuity becomes complex as scale increases.

- Terminology Alignment: Names, technical terms, and branded phrases must remain consistent throughout a project. Any drift across episodes or markets can confuse audiences and weaken credibility.

- Emotional Accuracy: Dubbing is about transferring emotion, not just translating words. Humor, sarcasm, and dramatic intensity must be preserved to maintain engagement and authenticity.

- Cost Management: Traditional studio dubbing can be expensive, especially for multi-language or multi-episode releases. Without scalable solutions, costs quickly multiply and limit expansion.

- Multi-Episode Complexity: Dubbing large series across multiple languages requires strict version control, voice tracking, and coordination. Without centralized systems, inconsistencies and fragmentation become inevitable.

- Ethical Voice Usage: AI voice cloning requires clear consent, governance, and controlled usage. Without proper safeguards, organizations risk legal and reputational consequences.

Without structured systems and oversight, large-scale dubbing can become chaotic. As localization expands from occasional projects to continuous global releases, managing these challenges effectively becomes just as important as the dubbing process itself.
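Terminology alignment is one of the easier challenges to check automatically. The sketch below assumes a simple glossary that maps each source term to its approved rendering in the target language, and it reports terms whose approved rendering is missing from a translated line. The function name `find_term_drift` and the sample glossary entry are made up for illustration.

```python
import re
from typing import Dict, List


def find_term_drift(glossary: Dict[str, str], source: str, translation: str) -> List[str]:
    """Return source terms present in `source` whose approved rendering
    does not appear in `translation` (case-insensitive match)."""
    missing = []
    for term, approved in glossary.items():
        in_source = re.search(rf"\b{re.escape(term)}\b", source, re.IGNORECASE)
        in_target = approved.lower() in translation.lower()
        if in_source and not in_target:
            missing.append(term)
    return missing


glossary = {"Starfall Academy": "Academia Starfall"}  # hypothetical series term

ok = find_term_drift(
    glossary,
    "Welcome to Starfall Academy.",
    "Bienvenidos a la Academia Starfall.",
)
bad = find_term_drift(
    glossary,
    "Welcome to Starfall Academy.",
    "Bienvenidos a la Escuela Starfall.",
)
```

A check like this only catches missing exact renderings. Inflected forms or deliberate paraphrases would still need a human reviewer.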

The Complexity of Series-Based Dubbing

Dubbing one video is manageable.

Dubbing a 40-episode series in 10 languages is not.

As scale increases, challenges multiply. Teams must manage voice tracking, maintain cross-episode continuity, coordinate multiple reviewers, handle batch processing, and maintain strict version control.
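A quick way to see how fast the workload grows is to enumerate it. The sketch below assumes four stages per episode and language (the stage names and the language codes are illustrative), and counts the units of work for the 40-episode, 10-language example.

```python
from itertools import product

episodes = [f"E{n:02d}" for n in range(1, 41)]                       # 40 episodes
languages = ["es", "pt", "fr", "de", "it", "hi", "ur", "ar", "ja", "id"]  # 10 languages
stages = ["translate", "voice", "sync", "review"]                   # assumed stages

# One dubbing job per (episode, language) pair.
jobs = list(product(episodes, languages))

# One trackable task per (episode, language, stage) triple.
tasks = list(product(episodes, languages, stages))

print(len(jobs), len(tasks))
```

Forty episodes in ten languages is 400 dubbing jobs, and with four stages each that becomes 1,600 tasks to assign, track, and version.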

Without structured workflows, small inconsistencies can quickly grow into larger problems. Most generic tools treat videos as standalone tasks.

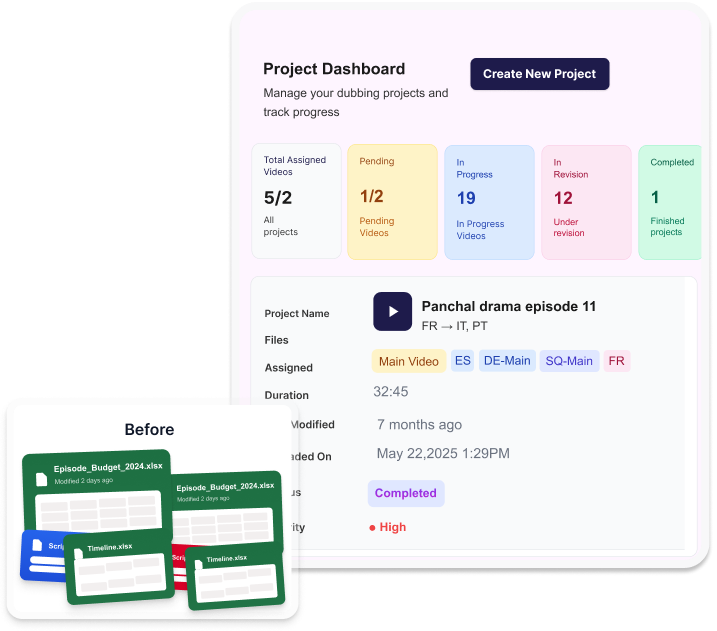

Serialized content requires series-level management. And that’s where Echo9 comes in.

How Echo9 Solves Series-Based Dubbing Challenges

Echo9 is designed for structured, repeatable video localization, not one-off dubbing tasks. While many AI tools treat each video separately, Echo9 is built around Series Management, making it ideal for long-form and serialized content.

With Episodic Content Management, entire seasons, course modules, or multi-episode projects are organized into structured, trackable collections from the start. Instead of managing episodes individually, teams begin with Project Creation, where the full series framework is defined, including voice strategy, terminology rules, and stylistic direction.

Once the structure is in place, Task Assigning ensures every episode, language, and review stage is clearly allocated to the right contributors. This removes manual coordination, reduces version confusion, and prevents creative drift across episodes.

What is established in Episode 1 (character voice, tone, naming conventions, and translation standards) carries through to Episode 10 and beyond. Voice Assignment keeps each character's voice the same across languages and episodes. Terminology Control keeps names, technical terms, and key phrases aligned across the entire series. Integrated subtitle and dubbing workflows eliminate fragmentation between teams. Built-in QA processes verify timing, tone, and translation accuracy before release.

With support for 100+ languages, Echo9 turns AI dubbing into a scalable system.

For platforms and enterprises managing large libraries, this structured approach preserves consistency and narrative integrity at scale.

Final Thoughts

Video dubbing has evolved from studio-bound production to AI-powered scalability built for global content distribution. What was once limited to major films is now essential for streaming platforms, educators, enterprises, and creators expanding internationally.

Dubbing enables global reach, stronger engagement, improved brand connection, and faster entry into new markets. By delivering content in native languages, organizations increase retention and unlock new revenue opportunities.

However, scale without structure creates inconsistency. Modern AI dubbing platforms like Echo9 combine automation with series-level management to maintain quality at scale.

In today’s content ecosystem, video dubbing is no longer optional. It is a growth strategy.

FAQs

How many languages does Echo9 support?

Echo9 supports dubbing in 100+ languages, making it suitable for truly global content distribution.

Can Echo9 handle an entire series, not just single videos?

Yes, and that’s exactly what sets Echo9 apart. It’s built around Series Management, allowing teams to maintain voice consistency, terminology alignment, and translation standards across every episode of a series.

What happens to voice consistency across multiple episodes?

Echo9’s Voice Assignment feature ensures each character retains the same voice across all episodes and languages. Terminology Control keeps names, branded phrases, and technical terms consistent throughout the entire project.

Is AI dubbing suitable for TV series?

Yes, AI dubbing is increasingly suitable for TV series and episodic content. When combined with structured Series Management, it ensures voice consistency and terminology alignment across episodes. This prevents drift in character identity and tone. It is particularly effective for multi-season projects that require scale and repeatability.

Who is Echo9 best suited for?

Echo9 is ideal for streaming platforms, e-learning providers, YouTube creators, enterprises managing training libraries, and any organization that needs to localize content at scale, consistently and efficiently.
